As one would expect from a package manager, the NuGet 3 tool is itself built from packages. These packages are published on the NuGet.org gallery and can be used by any application that needs NuGet-like features. Usage scenarios include plugins packaged as .nupkg files, application content, package-based installers and others. Several projects, such as Chocolatey and Wyam, already integrate NuGet for different purposes; however, truly wide adoption of the NuGet libraries requires better API documentation.

This post demonstrates one way of incorporating the NuGet libraries into an application. Dave Glick, the author of Wyam, has written a great introduction to the NuGet v3 APIs. I recommend reading his posts before continuing, although it is not required. The approach described in this post differs from the one in those articles: it makes it possible to create .NET Standard compatible libraries and to use the NuGet tooling not only in .NET Framework applications, but also in .NET Core solutions.

NuGet 3 uses a zillion libraries. NuGet 2 was composed of just a few libraries, whereas NuGet 3 is designed around many small ones; the sample code in this post alone uses nine of them. Another note about the API: it is still in development. This post is based on NuGet 3.5.0-rc1-final, and some APIs may change before the release.

The top-level NuGet libraries used by the solution are NuGet.DependencyResolver and NuGet.Protocol.Core.v3.

Workflow

The logical workflow is similar to the NuGet restore command. From the developer's perspective, it includes the following phases:

- Prepare package sources

- Identify the list of packages to install. This is a list of top-level packages requested by the user or application.

- Ask NuGet to discover the dependencies of the target packages

- Install the target packages and, with NuGet's help, recursively install their dependencies
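The four phases can be sketched without any NuGet types at all. The following is a minimal, illustrative C# program (top-level statements, C# 9 or later). It assumes a hypothetical in-memory `feed` dictionary in place of a real package source, uses hypothetical package ids, and ignores versions. It only shows how the phases fit together, not how NuGet implements them.

```csharp
using System;
using System.Collections.Generic;

// Phase 1 - package source: a hypothetical in-memory feed mapping a package id
// to the ids of its direct dependencies (versions are ignored in this sketch).
var feed = new Dictionary<string, string[]>
{
    ["App.Plugin"] = new[] { "Lib.Core", "Lib.Logging" },
    ["Lib.Logging"] = new[] { "Lib.Core" },
    ["Lib.Core"] = Array.Empty<string>(),
    ["Tool.Cli"] = new[] { "Lib.Core" },
};

// Phase 2 - the top-level packages requested by the user or application.
string[] targets = { "App.Plugin", "Tool.Cli" };

// Phase 3 - discover the transitive dependencies, visiting each package only once.
var discovered = new List<string>();
var seen = new HashSet<string>();
void Discover(string id)
{
    if (!seen.Add(id)) return; // already discovered through another parent
    discovered.Add(id);
    foreach (var dependency in feed[id])
    {
        Discover(dependency);
    }
}
foreach (var target in targets)
{
    Discover(target);
}

// Phase 4 - "install" every discovered package; a real installer would extract the .nupkg here.
foreach (var id in discovered)
{
    Console.WriteLine($"Installing {id}");
}
```

The `seen` set keeps a package that is shared by several parents from being processed twice. The installation code later in this post solves the same problem with its own set of installed packages.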

Main concepts

- PackageSource identifies a package feed. NuGet understands both local (file system-based) and remote package sources.

- SourceRepository is a combination of a PackageSource and various services that retrieve specific resources (metadata, dependencies, etc.)

- A context usually serves as an operation's configuration and cache. Examples are SourceCacheContext and RemoteWalkContext.

- RemoteDependencyWalker discovers all dependencies of the provided library

- LibraryRange describes a requested library by Name, VersionRange and LibraryDependencyTarget, which is the library type (Package, Project, etc.). The library type plays an important role in the way the dependencies are resolved in the sample code.

- LibraryDependency describes a single dependency of a library and wraps that dependency's LibraryRange; a library's Dependencies property holds the list of them

- IProjectDependencyProvider is a special library provider. It lets you feed custom libraries into RemoteDependencyWalker, and it is what makes the whole workflow possible.
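A LibraryRange does not pin a single version. In NuGet's range syntax, a bare version such as "3.0.0" (as used with `VersionRange.Parse` later in this post) means "3.0.0 or higher". When resolving package dependencies, NuGet prefers the lowest version that satisfies the range.

The following NuGet-free C# sketch models only that inclusive-minimum case with `System.Version`, and the `available` versions are hypothetical. Real ranges, with upper bounds, exclusive bounds and prerelease labels, are handled by NuGet's `VersionRange`, not by this code.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical versions of a single package available on a feed.
var available = new List<Version>
{
    new Version(2, 9, 0), new Version(3, 0, 0), new Version(3, 4, 0), new Version(3, 5, 0),
};

// Models only an inclusive-minimum range such as "3.0.0" (meaning >= 3.0.0):
// returns the lowest available version satisfying the range, or null if none does.
Version? ResolveLowestApplicable(Version minimum, IEnumerable<Version> versions) =>
    versions.Where(v => v >= minimum).OrderBy(v => v).FirstOrDefault();

Console.WriteLine(ResolveLowestApplicable(new Version(3, 0, 0), available)); // 3.0.0
Console.WriteLine(ResolveLowestApplicable(new Version(3, 1, 0), available)); // 3.4.0
```

Picking the lowest satisfying version, rather than the newest one, keeps the resolved graph stable when new package versions are published to the feed.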

Prepare package sources

The following code adds the official NuGet feed as the package source and registers the sources in the RemoteDependencyWalker’s context.

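// Use the NuGet v3 resource providers (metadata, dependency info, download, etc.)
var resourceProviders = new List<Lazy<INuGetResourceProvider>>();
resourceProviders.AddRange(Repository.Provider.GetCoreV3());

// The official NuGet feed is the only package source in this sample
var repositories = new List<SourceRepository>
{
    new SourceRepository(new PackageSource("https://api.nuget.org/v3/index.json"), resourceProviders)
};

// Cache shared by all requests to the sources
var cache = new SourceCacheContext();
var walkerContext = new RemoteWalkContext();

// Register every source as a remote library provider of the walker
foreach (var sourceRepository in repositories)
{
    var provider = new SourceRepositoryDependencyProvider(sourceRepository, _logger, cache, true);
    walkerContext.RemoteLibraryProviders.Add(provider);
}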

Identify a list of packages to install

RemoteDependencyWalker accepts only one root library for which to calculate the dependencies. When there are multiple target libraries, they have to be wrapped in a fake root library. IProjectDependencyProvider makes it possible to include that fake library in the dependency resolution process.

IProjectDependencyProvider defines two methods. SupportsType controls which library types the provider handles. GetLibrary is expected to return the library object.

The trick is to define the fake library as LibraryDependencyTarget.Project and let the project provider (ProjectLibraryProvider in this sample) accept only this library type. When RemoteDependencyWalker asks for the fake library, the provider can construct it with the list of target libraries as its dependencies. For example, the following code assumes that the two NuGet libraries are the target libraries to install.

public Library GetLibrary(LibraryRange libraryRange, NuGetFramework targetFramework, string rootPath)
{
    // The real target packages become the dependencies of the fake library
    var dependencies = new List<LibraryDependency>();
    dependencies.AddRange(new[]
    {
        new LibraryDependency
        {
            LibraryRange = new LibraryRange("NuGet.Protocol.Core.v3", VersionRange.Parse("3.0.0"), LibraryDependencyTarget.Package)
        },
        new LibraryDependency
        {
            LibraryRange = new LibraryRange("NuGet.DependencyResolver", VersionRange.Parse("3.0.0"), LibraryDependencyTarget.Package)
        },
    });

    // Describe the fake library itself as an already resolved project
    return new Library
    {
        LibraryRange = libraryRange,
        Identity = new LibraryIdentity
        {
            Name = libraryRange.Name,
            Version = NuGetVersion.Parse("1.0.0"),
            Type = LibraryType.Project,
        },
        Dependencies = dependencies,
        Resolved = true
    };
}
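The routing trick can also be modeled without NuGet. This simplified sketch is not NuGet's actual resolution code. Each provider is a hypothetical pair of delegates standing in for SupportsType and GetLibrary, and the walker asks the first provider that supports a library's type for that library's dependencies.

The fake root is typed as "Project", so only the project provider handles it and returns the real targets. Ordinary packages come from a hypothetical in-memory feed with hypothetical ids. The fake root itself never ends up in the list of packages to install.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical feed of ordinary packages: id -> ids of direct dependencies.
var feed = new Dictionary<string, string[]>
{
    ["Target.A"] = new[] { "Shared.Lib" },
    ["Target.B"] = new[] { "Shared.Lib" },
    ["Shared.Lib"] = Array.Empty<string>(),
};

// Providers modeled as (SupportsType, GetDependencies) delegate pairs.
var providers = new List<(Func<string, bool> SupportsType, Func<string, string[]> GetDependencies)>
{
    // Project provider: handles only "Project" libraries and returns the real targets
    // as the dependencies of the fake root.
    (type => type == "Project", name => new[] { "Target.A", "Target.B" }),
    // Package provider: handles "Package" libraries by looking them up in the feed.
    (type => type == "Package", name => feed[name]),
};

// Ask the first provider that supports the library type for the dependencies.
string[] ResolveDependencies(string name, string type)
{
    foreach (var provider in providers)
    {
        if (provider.SupportsType(type)) return provider.GetDependencies(name);
    }
    throw new InvalidOperationException($"No provider supports library type '{type}'.");
}

// Walk from the single fake root; every library below it is a package.
var packages = new List<string>();
void Walk(string name, string type)
{
    foreach (var dependency in ResolveDependencies(name, type))
    {
        if (packages.Contains(dependency)) continue; // already discovered
        packages.Add(dependency);
        Walk(dependency, "Package");
    }
}
Walk("FakeLib", "Project");

Console.WriteLine(string.Join(", ", packages)); // Target.A, Shared.Lib, Target.B
```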

Dependency discovery

When all preparations are done, RemoteDependencyWalker can start discovering the dependencies:

// Let the custom provider resolve the fake library
walkerContext.ProjectLibraryProviders.Add(new ProjectLibraryProvider());

// The fake root library is a project, so only the project provider handles it
var fakeLib = new LibraryRange("FakeLib", VersionRange.Parse("1.0.0"), LibraryDependencyTarget.Project);
var frameworkVersion = FrameworkConstants.CommonFrameworks.Net461;
var walker = new RemoteDependencyWalker(walkerContext);

GraphNode<RemoteResolveResult> result = await walker.WalkAsync(
    fakeLib,
    frameworkVersion,
    frameworkVersion.GetShortFolderName(),
    RuntimeGraph.Empty,
    true);

// Start from the fake root: its inner nodes are the target packages, so the targets
// are installed together with all of their dependencies
await InstallPackageDependencies(result);

The provided code does more than discover the dependencies. It also defines the target .NET framework version and iterates through the result to install the packages.

Installing the packages

Now the application is ready to install the discovered packages:

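// Packages installed so far: the walker's result is a tree, so a shared
// dependency can appear under several parents
private readonly HashSet<LibraryRange> _installedPackages = new HashSet<LibraryRange>();

private async Task InstallPackageDependencies(GraphNode<RemoteResolveResult> node)
{
    foreach (var innerNode in node.InnerNodes)
    {
        // Install each package only once
        if (!_installedPackages.Contains(innerNode.Key))
        {
            _installedPackages.Add(innerNode.Key);
            await InstallPackage(innerNode.Item.Data.Match);
        }

        // Continue with the dependencies of the current package
        await InstallPackageDependencies(innerNode);
    }
}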

private async Task InstallPackage(RemoteMatch match)
{
    var packageIdentity = new PackageIdentity(match.Library.Name, match.Library.Version);

    // Target folder and extraction options for the package
    var versionFolderPathContext = new VersionFolderPathContext(
        packageIdentity,
        @"D:\Temp\MyApp\",
        _logger,
        PackageSaveMode.Defaultv3,
        XmlDocFileSaveMode.None);

    // Copy the .nupkg from the provider (source) that resolved it and extract it
    await PackageExtractor.InstallFromSourceAsync(
        stream => match.Provider.CopyToAsync(
            match.Library,
            stream,
            CancellationToken.None),
        versionFolderPathContext,
        CancellationToken.None);
}

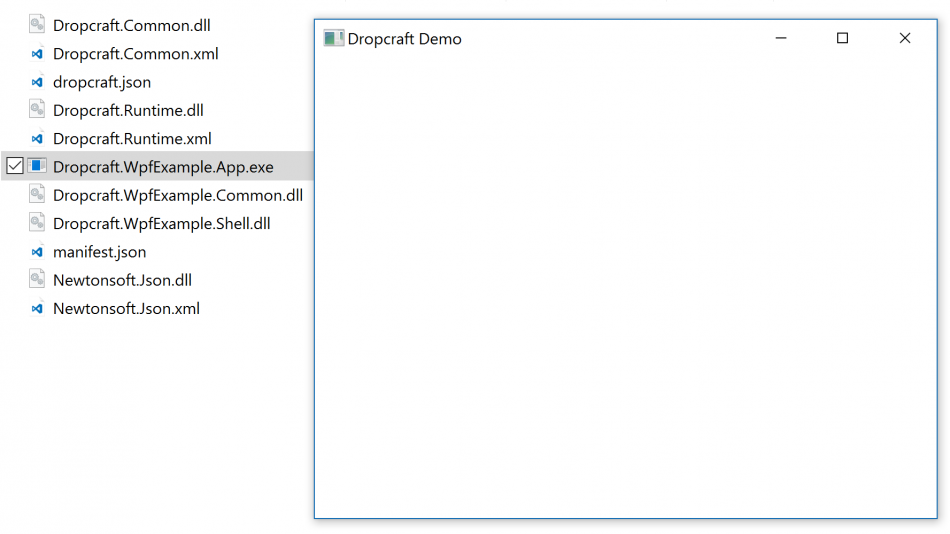

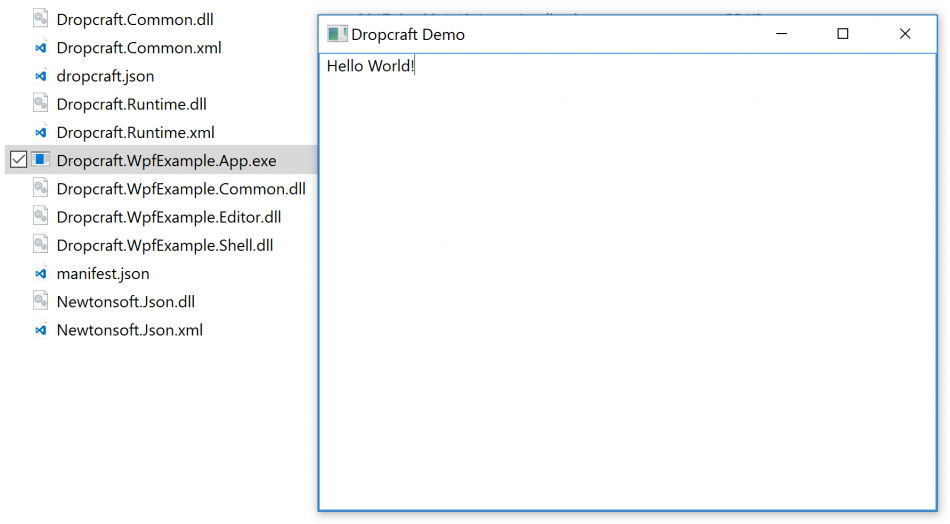

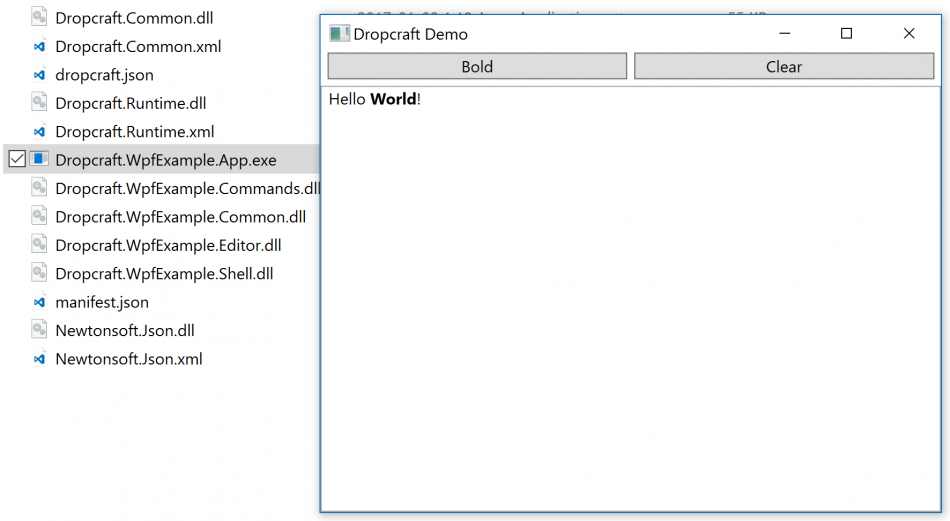

As a result of the execution, all resolved packages are de-duplicated and installed in D:\Temp\MyApp\[package-name]\[version] subfolders. Each package subfolder includes the .nupkg, the .nuspec and the libraries for all supported frameworks.
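The de-duplication matters because the walker's result is a tree rather than a flat list: a package needed by several libraries appears under each of its parents. The NuGet-free C# sketch below follows the shape of InstallPackageDependencies, starting from a fake root. A hypothetical `children` map stands in for the GraphNode result, and a list stands in for InstallPackage. The sketch counts how often each package is reached and records which packages are actually installed.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical dependency tree: a shared package is listed under every parent that
// needs it, just as it appears under several nodes of the walker's result.
var children = new Dictionary<string, string[]>
{
    ["FakeLib"] = new[] { "Target.A", "Target.B" },
    ["Target.A"] = new[] { "Shared.Lib" },
    ["Target.B"] = new[] { "Shared.Lib" },
    ["Shared.Lib"] = Array.Empty<string>(),
};

var installedPackages = new HashSet<string>();
var installOrder = new List<string>();
var timesReached = new Dictionary<string, int>();

// Same shape as InstallPackageDependencies: install each package once,
// but keep recursing into every occurrence in the tree.
void InstallPackageDependencies(string node)
{
    foreach (var innerNode in children[node])
    {
        timesReached[innerNode] = timesReached.TryGetValue(innerNode, out var count) ? count + 1 : 1;
        if (installedPackages.Add(innerNode))
        {
            installOrder.Add(innerNode); // stand-in for InstallPackage(...)
        }
        InstallPackageDependencies(innerNode);
    }
}

InstallPackageDependencies("FakeLib");
Console.WriteLine(string.Join(", ", installOrder)); // Target.A, Shared.Lib, Target.B
Console.WriteLine(timesReached["Shared.Lib"]);      // 2
```

Shared.Lib is reached twice but installed once. For large graphs, skipping the recursion for nodes that were already installed would avoid re-walking the same subtrees.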

And that's it. The provided code demonstrates the whole workflow. There are tons of small details hidden behind this simple demo, but it should be enough for starting your own experiments. Feel free to comment if you have any questions.